As AI becomes more deeply woven into everyday systems and decisions, having clear oversight becomes increasingly important. This is where the idea of Responsible AI takes center stage. Before exploring how organizations can protect users and maintain trust, it’s helpful to understand exactly what Responsible AI is.

What Is Responsible AI?

Responsible AI refers to the principles, processes, and governance frameworks that ensure artificial intelligence systems are ethical, transparent, safe, unbiased, secure, and aligned with human well-being.

It is about designing and deploying AI so that its benefits are maximized while its risks, such as discrimination, misinformation, and misuse, are minimized. Responsible AI is not only a technical practice; it is also a business imperative.

Why Responsible AI Matters Today

Organizations that integrate responsible AI practices often achieve greater operational efficiency and stronger customer trust. Despite these advantages, few organizations have fully implemented such practices. Closing this gap is a significant opportunity to accelerate innovation and reinforce confidence in AI technologies.

To better understand the factors contributing to this gap, it is useful to examine how organizations typically progress along their responsible AI journey.

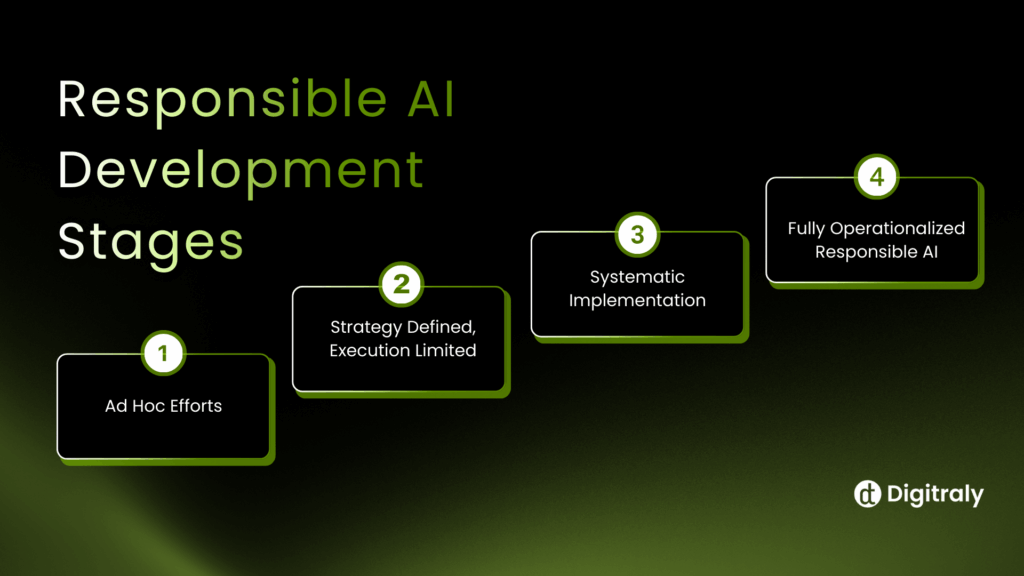

Understanding the Responsible AI Maturity Scale

Most companies advance through four stages as they work toward fully responsible and trustworthy AI:

Stage 1: Ad Hoc Efforts

Early responsible AI activities are reactive and inconsistent, with no formal policies or standards guiding AI development.

Stage 2: Strategy Defined, Execution Limited

The organization establishes a responsible AI framework, but real-world adoption remains uneven and immature.

Stage 3: Systematic Implementation

Responsible AI processes are more widely practiced, though organizations still lack proactive approaches to identifying and managing emerging risks.

Stage 4: Fully Operationalized Responsible AI

Responsible AI is embedded across the entire lifecycle, supported by proactive risk management, cross-functional collaboration, and engagement with ecosystem partners and stakeholders.

A 2025 survey found that fewer than 1% of companies have reached Stage 4, underscoring how much room there is for growth. Moving up the maturity scale does more than reduce risk; it turns responsible AI into a sustainable competitive advantage.

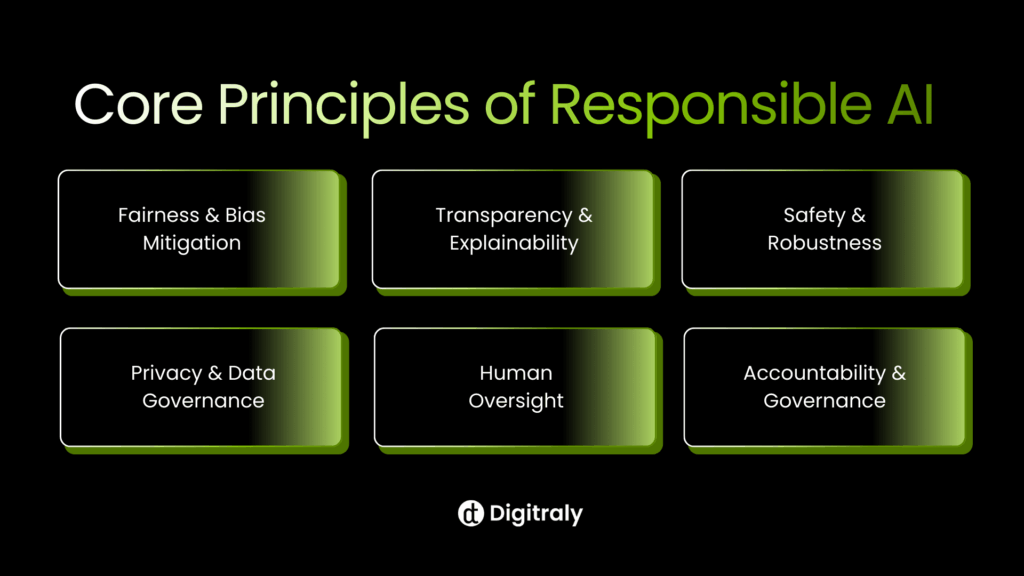

Core Principles of Responsible AI

1. Fairness and Bias Mitigation

AI must not reinforce or amplify discrimination.

This requires:

- Diverse training data

- Bias testing

- Continual model evaluation

- Comparable outcomes across demographic groups
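
Bias testing can start with something as simple as comparing how often each group receives a favorable outcome. The sketch below is one possible check, not a complete fairness audit: the function names, group labels, and sample predictions are all hypothetical. It computes per-group selection rates and the ratio between the lowest and highest rate, where 1.0 means parity.

```python
from collections import defaultdict


def selection_rates(records):
    """Return the share of favorable predictions for each group.

    `records` is an iterable of (group, prediction) pairs, where
    prediction is 1 for a favorable outcome and 0 otherwise.
    """
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, prediction in records:
        totals[group] += 1
        positives[group] += prediction
    return {group: positives[group] / totals[group] for group in totals}


def disparate_impact_ratio(rates):
    """Ratio of the lowest to the highest selection rate (1.0 means parity)."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # no group received a favorable outcome
    return min(rates.values()) / highest


# Hypothetical predictions for two illustrative groups.
sample = [
    ("A", 1), ("A", 1), ("A", 0), ("A", 1),
    ("B", 1), ("B", 0), ("B", 0), ("B", 1),
]
rates = selection_rates(sample)
ratio = disparate_impact_ratio(rates)
print(rates, round(ratio, 2))
```

A commonly cited rule of thumb flags ratios below 0.8 for closer review, but the right threshold and the right fairness metric depend on the use case and applicable regulation.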

2. Transparency and Explainability

Users should understand:

- What the AI does

- Why it made a decision

- What data influenced the outcome

Explainable AI is crucial for high-stakes domains like healthcare, finance, and public services.
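
For a simple linear scoring model, the questions "why it made a decision" and "what data influenced the outcome" can be answered directly by listing each feature's contribution to the score. The following is a minimal sketch under that assumption; the weights, feature names, and applicant values are invented for illustration, and more complex models generally need dedicated explanation techniques.

```python
def explain_linear_score(weights, bias, features):
    """Break a linear score into per-feature contributions.

    Returns the total score and the contributions sorted by
    absolute size, largest influence first.
    """
    contributions = {
        name: weights[name] * value for name, value in features.items()
    }
    score = bias + sum(contributions.values())
    ranked = sorted(
        contributions.items(), key=lambda item: abs(item[1]), reverse=True
    )
    return score, ranked


# Hypothetical credit-style model and applicant.
weights = {"income": 0.5, "debt_ratio": -2.0, "years_employed": 0.25}
applicant = {"income": 4.0, "debt_ratio": 0.5, "years_employed": 2.0}

score, ranked = explain_linear_score(weights, bias=-1.0, features=applicant)
for name, contribution in ranked:
    print(f"{name}: {contribution:+.2f}")
print(f"score: {score:.2f}")
```

Surfacing the ranked contributions alongside the decision gives reviewers and affected users something concrete to question or contest.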

3. Safety and Robustness

AI systems should remain safe and predictable even in:

- Unexpected scenarios

- Adversarial attacks

- Shifting data environments

4. Privacy and Data Governance

Responsible AI protects user data through:

- Encryption

- Anonymization

- Consent management

- Minimal data retention
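
Two of these safeguards are easy to illustrate in a few lines. The sketch below uses a keyed hash (HMAC-SHA256 from Python's standard library) to replace user identifiers with stable tokens, and a date check to enforce a retention window. Note that keyed hashing is pseudonymization rather than full anonymization, and that the key, record layout, and 30-day window are assumptions made for the example.

```python
import hashlib
import hmac
from datetime import datetime, timedelta, timezone


def pseudonymize(user_id: str, secret_key: bytes) -> str:
    """Replace an identifier with a keyed hash (pseudonymization only)."""
    return hmac.new(secret_key, user_id.encode("utf-8"), hashlib.sha256).hexdigest()


def within_retention(created_at: datetime, now: datetime, retention_days: int) -> bool:
    """True if a record is still inside the retention window."""
    return now - created_at <= timedelta(days=retention_days)


# Hypothetical key and records; a real key belongs in a secrets manager.
key = b"demo-key"
now = datetime(2025, 1, 31, tzinfo=timezone.utc)
records = [
    {"user_id": "user-123", "created_at": datetime(2025, 1, 1, tzinfo=timezone.utc)},
    {"user_id": "user-456", "created_at": datetime(2024, 12, 1, tzinfo=timezone.utc)},
]

# Keep only records inside a 30-day window, storing tokens instead of raw IDs.
kept = [
    {"user_token": pseudonymize(r["user_id"], key), "created_at": r["created_at"]}
    for r in records
    if within_retention(r["created_at"], now, retention_days=30)
]
print(len(kept))
```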

5. Human Oversight

AI should augment, not replace, human decision-making.

- Clear escalation pathways are necessary.

- Defined review checkpoints ensure evaluation.

- Robust override mechanisms maintain accountability.
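
One way to picture escalation pathways and override mechanisms is a routing rule that sends low-confidence or high-stakes predictions to a person and records who made the final call. This is an illustrative sketch only; the `Decision` type, the 0.9 threshold, and the function names are assumptions, not a prescribed design.

```python
from dataclasses import dataclass


@dataclass
class Decision:
    outcome: str
    confidence: float
    decided_by: str  # "model", "human", or "human:<reviewer>"


def route(prediction: str, confidence: float,
          threshold: float = 0.9, high_stakes: bool = False) -> Decision:
    """Escalate to a human when confidence is low or the case is high-stakes."""
    if high_stakes or confidence < threshold:
        return Decision("pending_review", confidence, "human")
    return Decision(prediction, confidence, "model")


def override(decision: Decision, reviewer: str, new_outcome: str) -> Decision:
    """Record a human override so accountability stays traceable."""
    return Decision(new_outcome, decision.confidence, f"human:{reviewer}")


auto = route("approve", confidence=0.95)
escalated = route("approve", confidence=0.60)
final = override(escalated, reviewer="analyst-1", new_outcome="deny")
print(auto, escalated, final, sep="\n")
```

Keeping the reviewer's identity on the final decision is what makes the override auditable later.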

6. Accountability and Governance

Organizations must define:

- Ownership of AI systems

- Ethical review boards

- Risk assessment procedures

- Model documentation

With these in place, responsible AI becomes part of the organizational culture.

How Organizations Can Implement Responsible AI

1. Establish Clear AI Governance Frameworks

Create internal guidelines, roles, and review committees responsible for oversight.

2. Conduct Risk Assessments Before Deployment

Review:

- Data sources

- Model risks

- Potential misuse scenarios

- Impact on users
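
A lightweight risk register can make this review repeatable. The sketch below scores each risk as likelihood times impact on a 1 to 5 scale and lists the items that should block deployment until mitigated. The scale, the threshold of 15, and the example entries are assumptions chosen for illustration; real assessments usually follow an organization's own risk methodology.

```python
from dataclasses import dataclass


@dataclass
class Risk:
    area: str          # review area, e.g. "Data sources"
    description: str
    likelihood: int    # 1 (rare) to 5 (almost certain)
    impact: int        # 1 (negligible) to 5 (severe)

    @property
    def score(self) -> int:
        return self.likelihood * self.impact


def deployment_blockers(risks, threshold=15):
    """Return risks at or above the threshold, highest score first."""
    flagged = [risk for risk in risks if risk.score >= threshold]
    return sorted(flagged, key=lambda risk: risk.score, reverse=True)


# Hypothetical entries, one per review area.
risks = [
    Risk("Data sources", "Training data under-represents some regions", 4, 4),
    Risk("Model risks", "Accuracy degrades on rare inputs", 3, 3),
    Risk("Potential misuse", "Outputs repurposed for profiling", 2, 5),
    Risk("Impact on users", "Wrongful denial of a service", 4, 5),
]

blockers = deployment_blockers(risks)
for risk in blockers:
    print(risk.score, risk.area, "-", risk.description)
```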

3. Use Ethical Data Practices

Ensure data is collected legally, ethically, and without hidden biases.

4. Monitor AI Systems Continuously

Post-deployment monitoring detects:

- Bias creep

- Model drift

- Unexpected behavior
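
Model drift is often tracked by comparing the distribution of a feature or score in production against a baseline captured at training time. The sketch below uses the Population Stability Index (PSI), one common choice among several drift metrics. The bin count, the small floor that avoids taking the logarithm of zero, and the sample data are assumptions for the example.

```python
import math


def population_stability_index(expected, actual, bins=10):
    """Compare two samples of one numeric feature; 0.0 means identical shares."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back if the baseline is constant

    def shares(values):
        counts = [0] * bins
        for value in values:
            index = int((value - lo) / width)
            index = min(max(index, 0), bins - 1)  # clamp out-of-range values
            counts[index] += 1
        # Floor each share so empty bins do not produce log(0).
        return [max(count / len(values), 1e-6) for count in counts]

    expected_shares = shares(expected)
    actual_shares = shares(actual)
    return sum(
        (a - e) * math.log(a / e)
        for e, a in zip(expected_shares, actual_shares)
    )


# Hypothetical baseline scores and a shifted production sample.
baseline = [i / 100 for i in range(100)]
production = [value + 0.5 for value in baseline]

psi_same = population_stability_index(baseline, baseline)
psi_shifted = population_stability_index(baseline, production)
print(psi_same, psi_shifted)
```

Teams often treat a PSI above roughly 0.2 as a signal to investigate, though that cutoff is a convention to tune rather than a fixed standard. The same comparison can be run per demographic group to watch for bias creep.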

5. Educate Teams and Build a Responsible AI Culture

Training programs help technical and non-technical teams understand policies and risks.

6. Collaborate With Diverse Stakeholders

Include perspectives from:

- Engineers

- Policymakers

- Legal experts

- Users

- Ethics researchers

Diverse input reduces blind spots.

The Future of Responsible AI

As generative AI becomes mainstream, the future will require:

- More human-AI collaboration

- Stronger content authenticity measures

- Tools to detect misinformation

- Auditable and certified AI systems

- Global alignment on regulations

Responsible AI will ultimately serve as the backbone of safe, trustworthy, and scalable innovation across industries.

If your organization is ready to move from intention to implementation, Digitraly can help you build AI systems that are both high-performing and responsible. Discover our AI development services now!